Scraping Data

The main target of my web-scraping was the digital archive of the Jangseogak Royal Library, a major digitization project undertaken by the Academy of Korean Studies since 1999. This digital archive is a collection of databases separated by source type and provenance, including the Jangseogak Library Old Documents DB, the Old Books & Documents DB, the Folklore and Oral Literature Recording DB, and the Korean Culture Photo DB. Most pertinent to my project on the military garrisons was the first of these databases, which alone consists of 2,560,000 image files of 12,205 books and text files of 4,195 books in 1,234 categories. From this vast ocean of data, I was most interested in obtaining the Records of the Central Military Garrisons, a compilation of military logs that hold special value for my dissertation project.

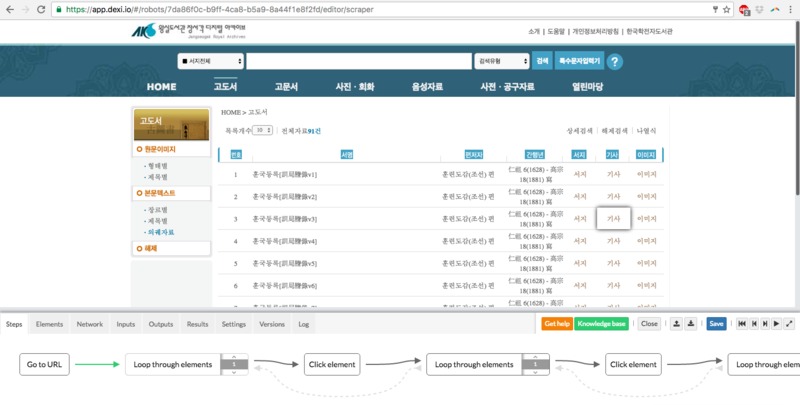

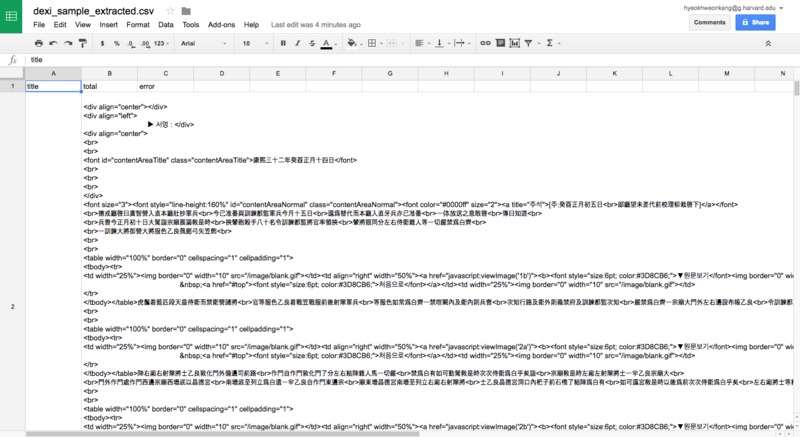

The critical challenge in scraping this source was navigating the deep hierarchical structure of the database, which in simple outline runs as follows: type of garrison record, individual book, individual entry. The challenge was compounded by the fact that there were as many as 11 different types of garrison records and more than 550 books in total, and that the entries within each book were split across several sub-pages on the website. In other words, the scraper had to be instructed to loop through cycles of clicking down to the deepest level, extracting the content, and returning to the starting level.
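The looping described above can be sketched in a few lines of Python. This is only an illustration of the three-level traversal, not the workflow I actually built: `iter_entries`, `list_children`, and the toy site below are hypothetical names and data, and the real archive's pages and links are not modeled here.

```python
from typing import Callable, Iterator, List, Tuple


def iter_entries(
    list_children: Callable[[str], List[str]],
    root: str = "archive",
) -> Iterator[Tuple[str, str, str]]:
    """Yield (record_type, book, entry) for every entry found under root.

    list_children is a hypothetical callable that returns the links found
    one level below a given page.
    """
    for record_type in list_children(root):        # level 1: type of garrison record
        for book in list_children(record_type):    # level 2: individual book
            # Level 3: individual entry. A real list_children would also have
            # to follow the sub-pages that a book's entries are split across.
            for entry in list_children(book):
                yield record_type, book, entry


# Toy stand-in for the site, so the sketch runs without any network access.
TOY_SITE = {
    "archive": ["type-A", "type-B"],
    "type-A": ["book-1"],
    "type-B": ["book-2", "book-3"],
    "book-1": ["entry-1", "entry-2"],
    "book-2": ["entry-3"],
    "book-3": [],  # a book with no entries is simply skipped
}


def toy_children(node: str) -> List[str]:
    return TOY_SITE.get(node, [])


entries = list(iter_entries(toy_children))
for record_type, book, entry in entries:
    print(record_type, book, entry)
```

Writing the traversal as a generator means each entry can be saved as soon as it is reached, so an interrupted run does not lose what has already been extracted.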

Thankfully, this complex process was facilitated by Dexi, which offered an easy user interface for automating clicks (to move between levels) and setting up loops. I was then able to train a "robot" or "extractor" that would glide through each book and extract each entry embedded in it. However, while the scraper performed successfully for several hundred entries, the volume of requests it sent soon overloaded the website until it crashed.

Realizing that this method was unsustainable, I then turned to Outwit Hub, which provided novel solutions for this second challenge. Although its user interface was less intuitive than Dexi's, this software was helpful in that it provided a combination of macros and workflow scheduling that could break the scraping job into smaller, more manageable chunks. I could set parameters such as periodicity, randomization, and maximum execution time, all of which ensured that my job would not overwhelm the host server.
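For readers who prefer code to a graphical tool, the same throttling idea can be approximated in Python. The sketch below is not Outwit Hub's API; it is a stand-in that assumes a hypothetical `fetch` function, and the chunk size, delay bounds, and time budget are illustrative values rather than the settings I used.

```python
import random
import time
from typing import Callable, Dict, Iterable, Iterator, List, Tuple


def chunked(items: Iterable[str], size: int) -> Iterator[List[str]]:
    """Split a long job into smaller lists of at most `size` items."""
    items = list(items)
    for start in range(0, len(items), size):
        yield items[start:start + size]


def run_chunk(
    urls: Iterable[str],
    fetch: Callable[[str], str],
    min_delay: float = 2.0,      # assumed lower bound on the pause, in seconds
    max_delay: float = 6.0,      # assumed upper bound on the pause, in seconds
    max_seconds: float = 600.0,  # assumed time budget for one chunk
    sleep: Callable[[float], None] = time.sleep,
    clock: Callable[[], float] = time.monotonic,
) -> Tuple[Dict[str, str], List[str]]:
    """Fetch urls one at a time with randomized pauses.

    Returns the results gathered so far and the urls left over when the
    time budget ran out, so that a later run can pick up the remainder.
    """
    started = clock()
    results: Dict[str, str] = {}
    pending = list(urls)
    while pending:
        if clock() - started >= max_seconds:
            break  # budget spent: stop and hand back what is left
        url = pending.pop(0)
        results[url] = fetch(url)
        if pending:
            # A random pause keeps the requests from arriving in a steady burst.
            sleep(random.uniform(min_delay, max_delay))
    return results, pending


# Dry run with a fake fetch and a fake sleep, so nothing is requested or delayed.
pauses: List[float] = []
done, left = run_chunk(["a", "b", "c"], fetch=str.upper, sleep=pauses.append)
print(done, left, len(pauses))
```

Periodicity would then come from running one chunk at a time on a schedule (for example, with the operating system's task scheduler), feeding any leftover URLs into the next run.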

The results of my scraping are still incomplete: breaking the workflow into smaller jobs made the scraping more sustainable, but stretched the extraction process to weeks and possibly months. However, the advantage of having built these scrapers is that they allow me to update and modify my data in accordance with changes in the digital archive. This is crucial given that the digital archive is being actively updated and expanded.